
The default volume settings on Apple Music and Spotify can also contribute to the impression that Apple Music is louder. Each platform's default volume level is the initial setting used when playing music. The platform developers choose this default, and it may differ between services. Simply put, if Apple Music's default volume level is higher than Spotify's, it may give the impression of being louder. However, users can manually adjust the volume on both platforms, so it is useful to make sure the volume settings are matched and consistent when comparing loudness between the two services.

The perceived loudness can also vary due to content differences between Apple Music and Spotify. The two platforms have different music catalogs and licensing agreements. Certain tracks or versions of songs available on Apple Music might have been mastered or encoded differently, leading to a perceived increase in loudness. Beyond licensing, the quality of the source recordings and the mastering and production techniques employed by different artists can significantly affect the perceived loudness of individual tracks.

Device and software integration matters as well. Volume ranges vary across different devices, operating systems, and music-streaming apps, because different platforms may use their own audio processing algorithms. Volume levels may also differ when the music-streaming app is integrated with the device's operating system. Finally, user choices and settings in the app can similarly affect perceived loudness.

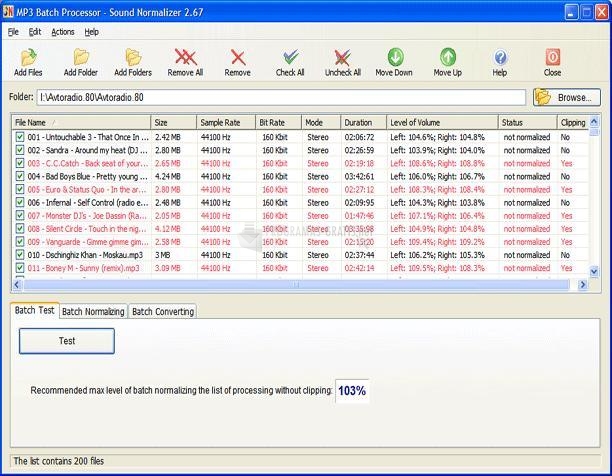

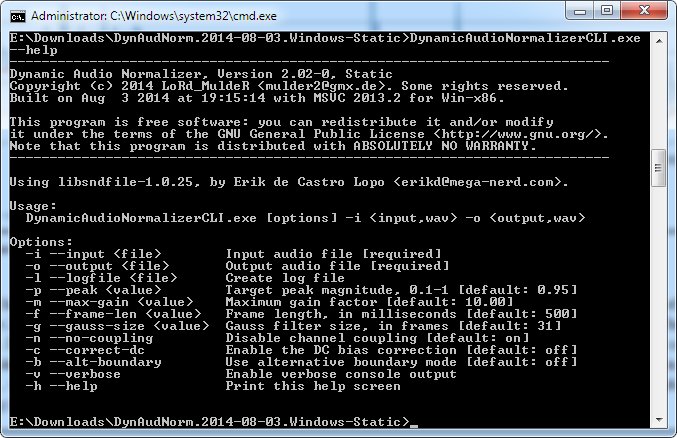

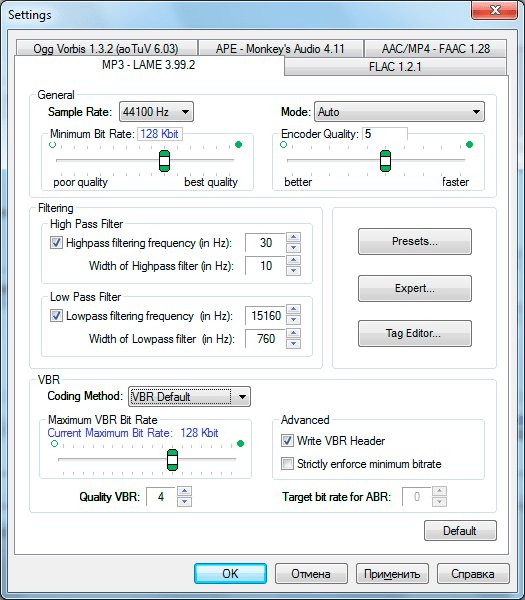

Audio compression reduces the amount of storage space audio tracks take up without significantly diminishing the sound quality. Spotify uses the Ogg Vorbis audio format, which applies its own compression algorithm. Even though this algorithm is designed to preserve a high level of sound quality, it may slightly change how loud the tracks sound, making them a little softer than before. Apple Music instead uses the AAC format, which also aims to reduce file sizes while maintaining high audio quality. AAC's particular compression scheme may handle audio differently, giving the impression that the volume has increased. Because of this, Apple Music can sound louder. Spotify also tends to stream at lower sample rates than Apple Music, whose higher sample rates can provide better sound quality.

Sound normalization is the process of adjusting the loudness of audio tracks to ensure a consistent listening experience. Spotify employs a method known as "Loudness Normalization," in which all tracks are processed to have a similar loudness level. This approach evens out volume differences between songs, creating a more uniform experience. Apple Music, on the other hand, takes a different approach. It relies on a program known as "Mastered for iTunes" (since renamed Apple Digital Masters), which seeks to preserve the original dynamic range of the songs, so that their volume levels stay as the artists and producers intended.
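To make the idea of loudness normalization concrete, here is a minimal Python sketch. It is an illustration under simplifying assumptions, not how Spotify or Apple Music actually process audio: it measures loudness as a plain RMS level in dBFS rather than the perceptual loudness measurement that streaming services typically use, and the function names (`rms_dbfs`, `normalization_gain_db`, `apply_gain`, `sine`) and the -14 dB target are hypothetical choices made for this example.

```python
import math


def rms_dbfs(samples):
    """RMS level in dB relative to full scale, for float samples in [-1.0, 1.0]."""
    if not samples:
        raise ValueError("samples must not be empty")
    mean_square = sum(s * s for s in samples) / len(samples)
    if mean_square == 0:
        return float("-inf")  # digital silence has no measurable level
    return 10 * math.log10(mean_square)


def normalization_gain_db(track_level_db, target_level_db=-14.0):
    """Gain in dB that moves a track from its measured level to the target.

    The -14 dB default is an illustrative assumption, not a value from the article.
    """
    return target_level_db - track_level_db


def apply_gain(samples, gain_db):
    """Scale samples by gain_db, clamping to [-1.0, 1.0] so values stay in range."""
    factor = 10 ** (gain_db / 20)
    return [max(-1.0, min(1.0, s * factor)) for s in samples]


def sine(amplitude, freq_hz=440, sample_rate=44100, seconds=1.0):
    """Generate a test tone that stands in for a real track in this sketch."""
    n = int(sample_rate * seconds)
    return [
        amplitude * math.sin(2 * math.pi * freq_hz * i / sample_rate)
        for i in range(n)
    ]


if __name__ == "__main__":
    # A "loud" master and a "quiet" master of the same tone.
    for name, track in [("loud", sine(0.8)), ("quiet", sine(0.1))]:
        before = rms_dbfs(track)
        gain = normalization_gain_db(before)
        after = rms_dbfs(apply_gain(track, gain))
        print(f"{name}: {before:.1f} dBFS, gain {gain:+.1f} dB, now {after:.1f} dBFS")
```

In this sketch the loud tone is turned down and the quiet tone is turned up until both sit at the same level. That is one plausible reason a service that normalizes every track can seem quieter than one that plays each track at its mastered level.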

Why is Apple Music Louder than Spotify?

Apple Music tends to sound louder than Spotify because of sound normalization, audio compression, volume level settings, and content differences.
